As NASA’s Artemis II mission readies for its historic crewed test flight around the Moon, it’s worth pausing to marvel not just at the drama of space exploration, but at the quiet revolution that made it possible: computing power.

For decades, people have repeated the saying, “Your smartphone is more powerful than the computer that sent astronauts to the Moon in 1969.” It sounds like hyperbole, but it’s absolutely true. Let’s unpack what that really means, and how the astonishing march of transistor technology brought us from room-sized mainframes to the pocket-sized processors now helping steer humanity back toward the lunar surface.

The Computer That Took Us to the Moon

When Neil Armstrong and Buzz Aldrin landed on the lunar surface in 1969, they were guided by a marvel of its time: the Apollo Guidance Computer (AGC). Designed at the MIT Instrumentation Laboratory and manufactured by Raytheon, it was one of the first digital computers to use integrated circuits. By today’s standards, its specifications are almost laughable.

- Processor Speed: About 1 MHz (one million cycles per second)

- Memory: About 4 KB of erasable memory (RAM) plus roughly 72 KB of fixed, read-only core rope memory, far less than a single smartphone photo

- Logic: Roughly 5,600 NOR gates, packaged in about 2,800 integrated circuits

That’s not a typo. The AGC’s computational muscle is often compared to that of a 1970s pocket calculator. Yet it guided astronauts across a quarter-million miles of space with remarkable precision.

Its success wasn’t just about hardware capacity. It was a triumph of human ingenuity: engineers optimized every line of code because they had to. Every bit counted, quite literally.

Among those engineers was computer scientist Margaret Hamilton, who led the onboard flight software team at the MIT Instrumentation Laboratory. Hamilton is credited with coining the term “software engineering,” and her team’s software used priority scheduling that let Apollo 11 shed low-priority work and keep flying through the 1201 and 1202 overload alarms during landing. Her pioneering work not only helped make the Moon landing possible but also helped lay the foundation of modern software reliability, with principles still guiding systems like Artemis II today.

The Smartphone in Your Hand

Fast forward to today. A modern smartphone (say, an iPhone 16 or Google Pixel 8) is built around a chip with on the order of 15 billion transistors or more and runs at speeds measured in gigahertz (billions of cycles per second). Compared to the Apollo Guidance Computer:

- By common estimates, your phone is at least a million times faster in raw processing performance.

- It has about two million times more working memory: 8 GB of RAM against the AGC’s roughly 4 KB of erasable memory.

- It performs multitasking operations the Apollo computer could never even imagine.

Put differently: if the AGC were a tricycle, your smartphone would be a Formula 1 car — compact, elegant, and bordering on impossible by 1960s standards.
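For readers who like to check the arithmetic, here is a minimal sketch of the memory comparison. The AGC figures are the commonly documented ones (2,048 words of erasable memory and 36,864 words of fixed memory, 16 bits per word including parity); the phone figures of 8 GB of RAM and 128 GB of storage are assumptions chosen for illustration, and the names in the code are made up for this example.

```python
# Rough memory comparison between the Apollo Guidance Computer and a phone.
# AGC: 2,048 erasable words and 36,864 fixed words, 16 bits (2 bytes) each.
# Phone values are illustrative assumptions, not measurements of any model.

AGC_WORD_BYTES = 2
AGC_ERASABLE_WORDS = 2_048
AGC_FIXED_WORDS = 36_864

PHONE_RAM_BYTES = 8 * 1024**3        # assumed 8 GB of RAM
PHONE_STORAGE_BYTES = 128 * 1024**3  # assumed 128 GB of storage


def ratio(phone_bytes: int, agc_words: int) -> float:
    """Return how many times larger the phone figure is than the AGC figure."""
    return phone_bytes / (agc_words * AGC_WORD_BYTES)


ram_ratio = ratio(PHONE_RAM_BYTES, AGC_ERASABLE_WORDS)
storage_ratio = ratio(PHONE_STORAGE_BYTES, AGC_FIXED_WORDS)

print(f"RAM vs erasable memory: about {ram_ratio:,.0f}x")
print(f"Storage vs fixed memory: about {storage_ratio:,.0f}x")
```

Under these assumptions both ratios land near two million. A phone with more storage would push the second figure higher, so treat the result as an order-of-magnitude estimate.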

The Transistor: The Billion-Times Leap

At the heart of this exponential growth is a tiny component that quietly changed the world — the transistor.

Invented in 1947, transistors act as miniature electrical switches, controlling the flow of electrons that represent the binary “1”s and “0”s of digital logic. Early computers used vacuum tubes, each the size of a thumb and prone to burning out. Then came transistors, first as bulky metal cans, then as microscopic silicon structures patterned by photolithography.
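To see how simple switches add up to arithmetic, here is a small sketch that builds a one-bit adder out of nothing but NOR gates, the same kind of gate the AGC’s logic was built from. It is a software model of the logic only, not of any real circuit, and the function names are invented for this illustration.

```python
# Model a NOR gate, then build other logic from it. NOR is "universal":
# every other gate can be made by wiring NOR gates together.

def nor(a: int, b: int) -> int:
    """Output 1 only when both inputs are 0."""
    return 0 if (a or b) else 1


def not_(a: int) -> int:
    return nor(a, a)


def or_(a: int, b: int) -> int:
    return not_(nor(a, b))


def and_(a: int, b: int) -> int:
    return nor(not_(a), not_(b))


def xor_(a: int, b: int) -> int:
    # True when exactly one input is 1.
    return and_(or_(a, b), not_(and_(a, b)))


def half_adder(a: int, b: int) -> tuple[int, int]:
    """Add two bits and return (sum_bit, carry_bit)."""
    return xor_(a, b), and_(a, b)


for a in (0, 1):
    for b in (0, 1):
        print(f"{a} + {b} -> sum, carry = {half_adder(a, b)}")
```

Chain enough of these adders together and you can add whole numbers; everything else a processor does is built up from the same kind of switching.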

Each leap in transistor design brought two miracles:

- Miniaturization — more transistors could fit into smaller chips.

- Efficiency — each consumed less power and generated less heat.

This steady shrinking is the trend captured by Moore’s Law, named for Gordon Moore, who went on to co-found Intel. In 1965 he observed that the number of transistors on a chip was doubling roughly every year, and in 1975 he revised the pace to roughly every two years. That prediction has held, astonishingly, through most of the decades since, turning computing progress into a self-reinforcing rocket ride.
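The doubling rule is easy to play with in code. The sketch below assumes a clean doubling every two years, starting from the Intel 4004 of 1971 with its roughly 2,300 transistors; the function name and the choice of starting point are illustrative, and real chips only loosely follow the curve.

```python
def projected_transistors(start_count: int, start_year: int, year: int,
                          doubling_years: float = 2.0) -> float:
    """Project a transistor count assuming a fixed doubling period."""
    doublings = (year - start_year) / doubling_years
    return start_count * 2 ** doublings


# Starting point: Intel 4004 (1971), roughly 2,300 transistors.
for year in (1971, 1981, 1991, 2001, 2011, 2021):
    count = projected_transistors(2_300, 1971, year)
    print(f"{year}: about {count:,.0f} transistors")
```

Fifty years means 25 doublings, which takes 2,300 transistors to about 77 billion. That is the same ballpark as the largest processors of the early 2020s.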

Consider the numbers:

- Apollo-era computers: thousands of transistors

- 1990s PCs: millions to tens of millions

- Modern CPUs: tens of billions

- The largest AI chips today: hundreds of billions or more, with features approaching atomic scales

We’ve gone from soldering individual transistors by hand to printing them as nanoscale patterns on a silicon die smaller than a fingernail.

From Mainframes to Moonshots

When the Apollo missions flew, computing power lived in windowless rooms filled with humming racks of equipment. Engineers typed commands into terminals and waited for results printed on paper tape. Today, astronauts aboard the Orion spacecraft will fly with on-board systems capable of:

- Real-time navigation using multiple redundant computers

- Autonomous course correction algorithms

- AI-assisted mission diagnostics

- High-resolution video capture and data streaming

That last point alone is mind-blowing: Artemis II’s crew is expected to beam ultra-high-definition video from the vicinity of the Moon over a laser communications link, managed by flight software running to millions of lines of code. That leap in data and complexity would have been unthinkable in 1969.

Yet this isn’t just a story about faster chips. It’s about trusting machines with more responsibility. The astronauts of the Apollo era already relied on the AGC for much of their flying, taking manual control at critical moments; Artemis II’s crew will collaborate with onboard systems that monitor and react in real time across far more of the spacecraft.

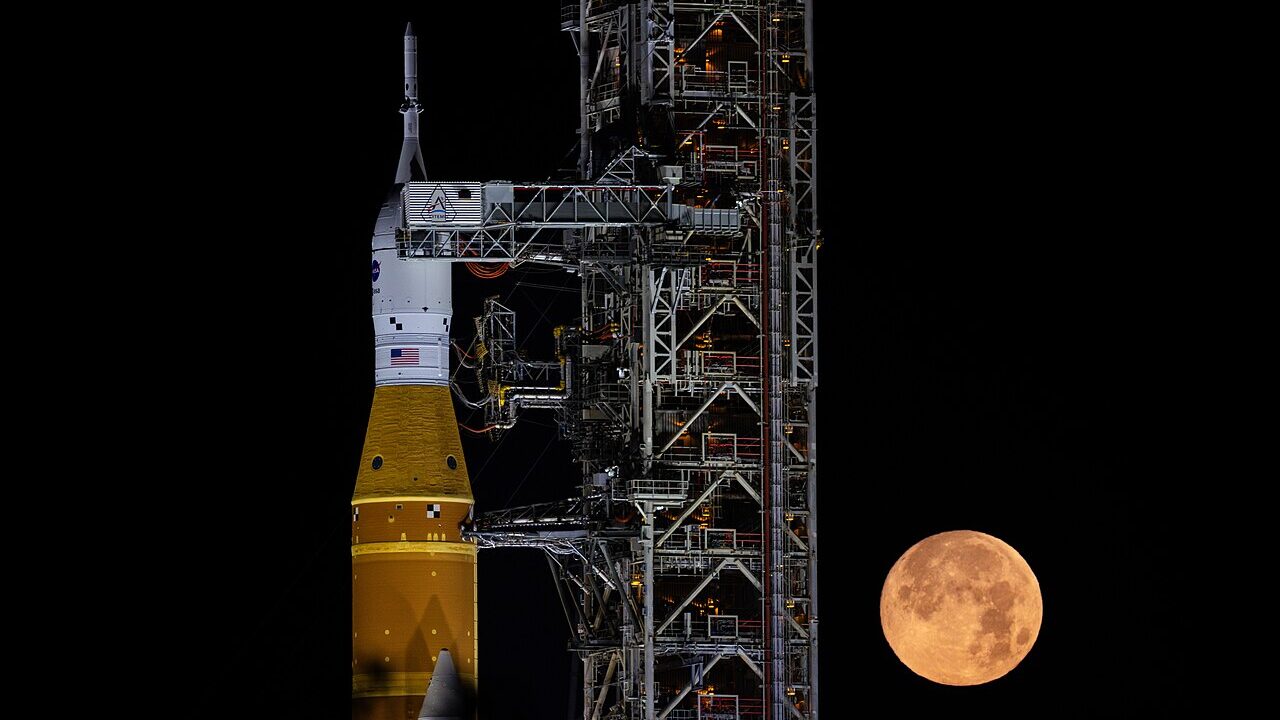

Artemis II: A Milestone Worth Celebrating

Set for launch from Kennedy Space Center, Artemis II is NASA’s first crewed mission of the Artemis program, a crucial step toward returning humans to the Moon. The team includes:

- Reid Wiseman, Commander, NASA

- Victor Glover, Pilot, NASA

- Christina Koch, Mission Specialist, NASA

- Jeremy Hansen, Mission Specialist, Canadian Space Agency

This diverse crew represents not just two nations but a new era of space exploration. While Apollo spoke to the Cold War’s competitive spirit, Artemis stands for collaboration and continuity — building the infrastructure to sustain a human presence beyond Earth.

And behind that effort isn’t just rocket fuel; it’s silicon. Millions of transistor-level calculations will monitor propulsion, power, navigation, and life-support systems all in real time. In a sense, the transistors in your smartphone have gone cosmic: their descendants now form the backbone of humanity’s next great voyage.

What This Means for Us on Earth

It’s tempting to think of such computing progress as something abstract or distant, useful only to astronauts or engineers. But the same forces driving Artemis II power our everyday lives.

- Miniaturization fuels innovation. The same transistor physics that enable spaceflight also enable wearable health monitors, electric vehicles, and AI assistants.

- Efficiency translates to sustainability. Making chips smaller and smarter reduces energy use, a hidden victory for both tech companies and the planet.

- Open collaboration accelerates progress. NASA’s integration of commercial and international partners mirrors the trend in computing itself — open-source hardware, collective research, and interdisciplinary design.

In short, every breakthrough in computing architecture ripples through society, from orbit to office.

Where We Go Next

The next frontier isn’t just the Moon; it’s computing beyond silicon. As engineers approach atomic-scale limits, new materials and approaches such as graphene, quantum devices, and photonic chips promise even greater leaps in performance. These could power later Artemis missions and, eventually, crewed flights to Mars, where autonomy will be even more critical.

In that sense, Artemis II isn’t merely a spaceflight milestone. It’s a time capsule of technological evolution — carrying with it the invisible legacy of every transistor that shrank, every processor that sped up, and every programmer who made machines think faster than before.

Because while rockets grab the headlines, it’s often the computers quietly humming beneath the cockpit panels that make history possible.

Your Turn: Reflect on the Power in Your Pocket

As the Artemis II countdown continues, take a moment to look at your smartphone, that sleek rectangle of glass and silicon. It contains billions of transistors working in harmony, each able to switch billions of times per second, each a distant descendant of the circuits that once guided Apollo 11 to Tranquility Base.

Humanity’s journey to the Moon is a story about courage, teamwork, and exploration — but it’s equally a story about computation. Our machines have evolved from helpers to copilots, and soon, perhaps, to autonomous explorers themselves.

So this week’s thought experiment is simple:

If your phone already outmatches Apollo’s computers by a millionfold, what could the computers of 2069 — a century after the first Moon landing — make possible?

Stay curious, stay grounded, and maybe, look up — the next great leap starts with the code we write here on Earth.
